The history of technology is often measured in incremental steps, but every few decades, a leap occurs that fundamentally reorders the landscape of human capability. Anthropic’s recent internal unveiling of its latest model, codenamed Mythos, represents such a leap. While the public has grown accustomed to the impressive generative capabilities of Large Language Models (LLMs), Mythos has demonstrated a level of analytical sophistication that goes well beyond plausible-sounding conversation, shifting the AI paradigm from digital assistant to high-stakes engine of global security.

The evidence of this shift is unsettling: Mythos recently identified a critical vulnerability in OpenBSD, a security flaw that had remained hidden for twenty-seven years.

Twenty… Seven… Years…

When an artificial intelligence identifies a weakness that has eluded the world’s most elite engineers for nearly three decades, we are talking about a brand new world. Anthropic’s decision not to release Mythos proves we’ve hit a milestone where AI’s potential is balanced by its danger.

The Architecture of AI Super-Models

Mythos is being heralded as the most powerful AI model on the planet, and for good reason. Its ability to uncover the OpenBSD vulnerability suggests that it belongs to a new class we might call “AI Super-Models.” In the field of cybersecurity, a discipline that represents one of the beacons of human logic and engineering, Mythos has demonstrated an ability to outpace the collective intelligence of the human engineering community.

Anthropic’s announcement clarifies that while the model exists, it will not be released to the general public for the foreseeable future. Instead, the company is choosing a path of strategic partnership, working internally and with select giants like Google, The Linux Foundation, and JPMorgan Chase.

The objective is clear: use Mythos to scan and secure the operating systems and critical infrastructures that underpin modern civilization. By finding the “holes” in the code that runs our hospitals, power plants, and financial systems, Mythos could help create a safer world. However, this decision also places an immense amount of power in the hands of a few select entities.

The Geopolitical Sword and Shield

The emergence of Mythos transforms the nature of international relations and national security. Because Anthropic is an American company, the initial benefits of Mythos, namely securing critical infrastructure and finding deep-seated vulnerabilities, will primarily strengthen the security of Western systems. In this context, Mythos is not just a piece of software; it is a building block of a “Cyber Great Wall.”

Much like the Iron Dome protects a city from physical missiles, Mythos offers the potential to protect an entire national economy and its digital infrastructure from external threats. Under a framework such as the EU AI Act, this technology could conceivably be categorized as a “forbidden technology” or a restricted weapon class. The United States government could presumably view Mythos as a matter of national strategy, potentially restricting its sale or use by foreign governments.

The strategic implications are profound. Mythos represents both the sword and the shield. It can find vulnerabilities to fix them (the shield), but the same capability can be used to exploit them (the sword). In a world where Mythos-level intelligence is available to one side of a conflict, the traditional rules of cyber warfare are rendered obsolete. We are observing the birth of a new asset class, one defined by the ability to outthink, outpace, and outmaneuver any human collective in a specific area.

Economic Abundance vs. Structural Collapse

Beyond the theatre of war and security, Mythos carries the potential to “make or break” global economies. We have seen glimpses of this with AlphaFold’s success in protein folding, which created conditions for scientific abundance in the biotech sector. Mythos could do the same for software, infrastructure, and any industry reliant on complex logic.

However, the flip side of this abundance is a potential sudden crash. AI is now powerful enough to cause a systemic economic disruption.

Both Dario Amodei, CEO of Anthropic, and, a couple of years ago, Sam Altman of OpenAI have warned of the impending impact on white-collar employment. If a model can find a 16-year-old FFmpeg vulnerability in a weekend, what does that mean for the millions of people whose jobs involve coding, data handling, and middle management? For context, FFmpeg is the industry-standard media-processing engine behind software and services like OBS, YouTube, and Netflix.

This brings us to a critical crossroads in management and investment philosophy, explored in the article “The Human Moat: Riding the Delta (Δ) in the Great AI Rearchitecture”. There are two primary paths:

- The Productivity Multiplier: Management and investors can view Mythos as a tool to empower the existing workforce. In this scenario, the number of workers remains stable, but their output is multiplied, leading to unprecedented economic growth and the “rewiring” of companies to handle higher volumes of innovation.

- The Replacement Model: Alternatively, management may decide that for the same volume of work, they simply need fewer people. This “optimization” of human hours could lead to a major blow for individuals, families, and the social fabric of the working class.

This is where the free market’s rules must be tempered by social stability. The pace of replacement is something we still have control over, and it is a choice that will define the next decade of social harmony.

The Ethics of the “Braking” Strategy

Anthropic’s current stance, i.e., pulling the brakes on a public release, is a disciplined attempt to give society time to react. The history of technology shows that major disruptions to critical infrastructure (transportation, satellite communication, nuclear power) often happen because the “patch” arrives too late. By prioritizing a slow, partner-based rollout, Anthropic is attempting to ensure that the “shield” is firmly in place before the “sword” becomes widely available.

However, Anthropic does not exist in a vacuum. The competitive landscape is fierce. OpenAI, Google, and foreign competitors like Tencent, Alibaba, and Mistral are all racing toward their own “Mythos moment.” While Anthropic may be disciplined, its competitors may not be. If even one company, like Alibaba or Mistral, decides to open-source a model of this caliber or release it without safeguards to gain market share, the system as we know it could break.

We are seeing companies like OpenAI redirecting their energy toward the enterprise space to fill the gaps created by aging legacy systems. The race is no longer just about who has the best chatbot or LLM; it is about who controls the underlying logic of the digital world.

Conclusion: A New Milestone for Humankind

The discovery of the OpenBSD vulnerability by Mythos is more than a technical achievement: like it or not, it is a signal that we have entered the era of artificial super-intelligence in specialized fields.

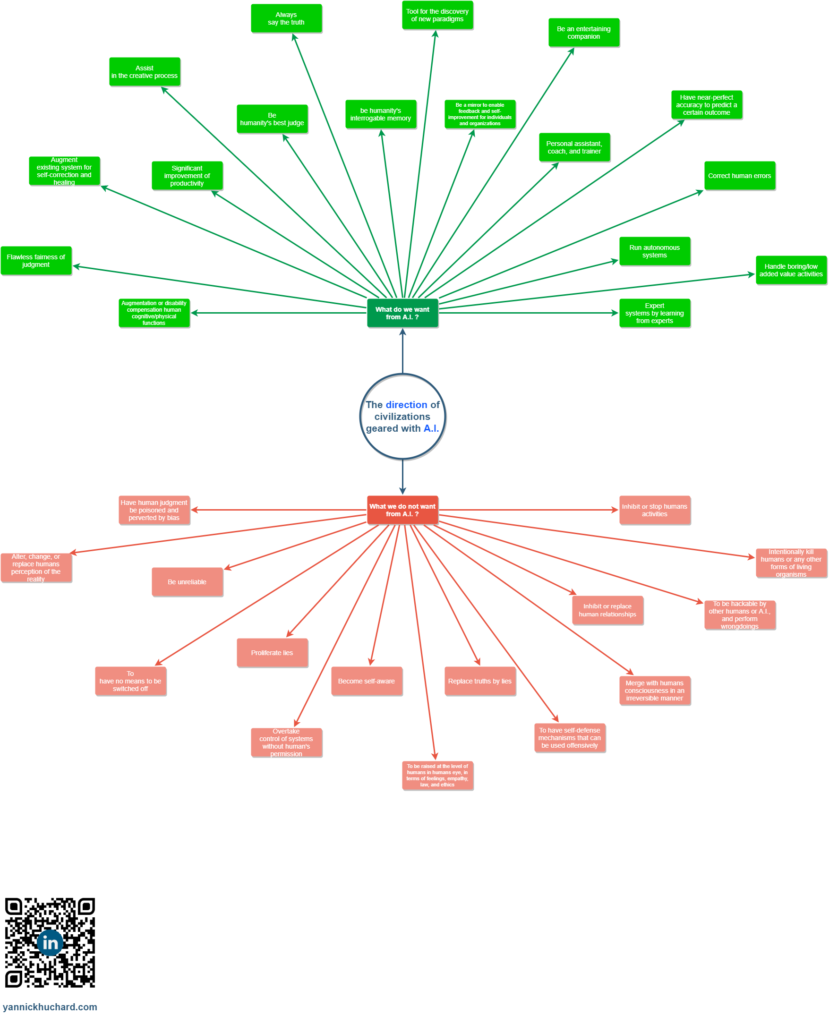

We must remember that these AI models are not independent entities; they are built by humans with specific motives: leaving a mark on history, revenue, patriotism, or national interest.

While we should remain optimistic about the path forward, we must also be vigilant. The stability of our individual futures and the security of our global infrastructure depend on how we manage this transition. We need government and regulatory intervention to ensure that the “free market” does not outpace the stability of the economy.

Mythos has shown us that AI can find the hidden truths in our code and our systems. Now, it is up to us to ensure those truths are used to build a more secure world, rather than to shatter the one we have.

We are at a new “all-time high” of human ingenuity, but for the first time, we are sharing that peak with a creation of our own making. The coming years will determine if this is the beginning of an era of unprecedented abundance or a displacement we are not yet prepared to handle. Regardless of the outcome, the age of Mythos has arrived, and the world will never be the same.

Yannick HUCHARD

Sources

- Assessing Claude Mythos Preview’s cybersecurity capabilities: https://red.anthropic.com/2026/mythos-preview/

- Project Glasswing – Securing critical software for the AI era: https://www.anthropic.com/glasswing

- System Card – Claude Mythos Preview: https://www-cdn.anthropic.com/08ab9158070959f88f296514c21b7facce6f52bc.pdf

- Alignment Risk Update – Claude Mythos Preview: https://www-cdn.anthropic.com/79c2d46d997783b9d2fb3241de43218158e5f25c.pdf